Always-on intelligence below 1μW

the missing layer in the compute infrastructure

Silicon-validated processor • Evaluation kits available for pre-order • Silicon-calibrated SDK for rapid benchmarking

Our neuromorphic processor operates directly on sensor data through a fully analog physical neural network, enabling continuous intelligence before digital processing.

By removing the discontinuity between the physical and digital worlds, it enables truly always-on perception, unlocking a new generation of sensing-driven intelligent systems.

We begin with audio. We scale across every sensor.

VISION

A NEW CLASS of intelligent sensors

Our proprietary processor combines in-memory computing with the physical implementation of spiking neurons and synapses (neuromorphic computing) using analog circuits.

This approach enables us to implement full networks in hardware, with the natural ability to process temporal information in real time, and with extremely low energy consumption.
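As a point of reference for what a spiking neuron computes, here is a minimal discrete-time leaky integrate-and-fire (LIF) sketch. It is a generic textbook model, not a description of the analog circuits inside our processor; the time step, time constant, threshold and reset values are illustrative assumptions.

```python
import math


def lif_spike_times(inputs, dt=1e-3, tau=20e-3, threshold=1.0, v_reset=0.0):
    """Simulate a leaky integrate-and-fire neuron and return the step indices that spike.

    inputs: input added to the membrane at each step (illustrative units).
    dt, tau, threshold, v_reset: assumed parameters, not H1 silicon values.
    """
    decay = math.exp(-dt / tau)  # per-step membrane leak factor
    v = v_reset
    spikes = []
    for t, i_in in enumerate(inputs):
        v = v * decay + i_in  # leak, then integrate this step's input
        if v >= threshold:
            spikes.append(t)  # fire a spike...
            v = v_reset       # ...and reset the membrane potential
    return spikes


# Same total input (1.2), different timing:
print(lif_spike_times([0.6, 0.6]))   # two strong pulses close together
print(lif_spike_times([0.04] * 30))  # the same charge spread thinly over time
```

The leak is what makes the neuron sensitive to timing: two 0.6 pulses in consecutive steps push the membrane over threshold, while the same total input spread as 0.04 per step leaks away before it can accumulate, so no spike is emitted.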

Analog-to-Information™

A new compute layer

All sensors are inherently analog. They continuously generate temporal signals: sound and voice, vibrations, motion, biosignals such as heart rate or EMG.

Digital scaling improves peak compute, but always-on intelligence remains constrained by a structural energy floor.

Optimization techniques, including in-memory accelerators, mixed-signal spiking implementations, quantization or pruning, improve efficiency, but they do not eliminate the fundamental always-on power overhead of digital and mixed-signal architectures.

Neuronova operates below this digital energy floor.

We remove the always-on perception pipeline from the central SoC and replace it with a sub-1 µW analog intelligent front-end, processing temporal information directly at the source and waking digital systems only when meaningful events occur.

This is Analog-to-Information™: the missing compute layer purpose-built for sensors and essential for scalable always-on intelligence everywhere.


You can really do more with less

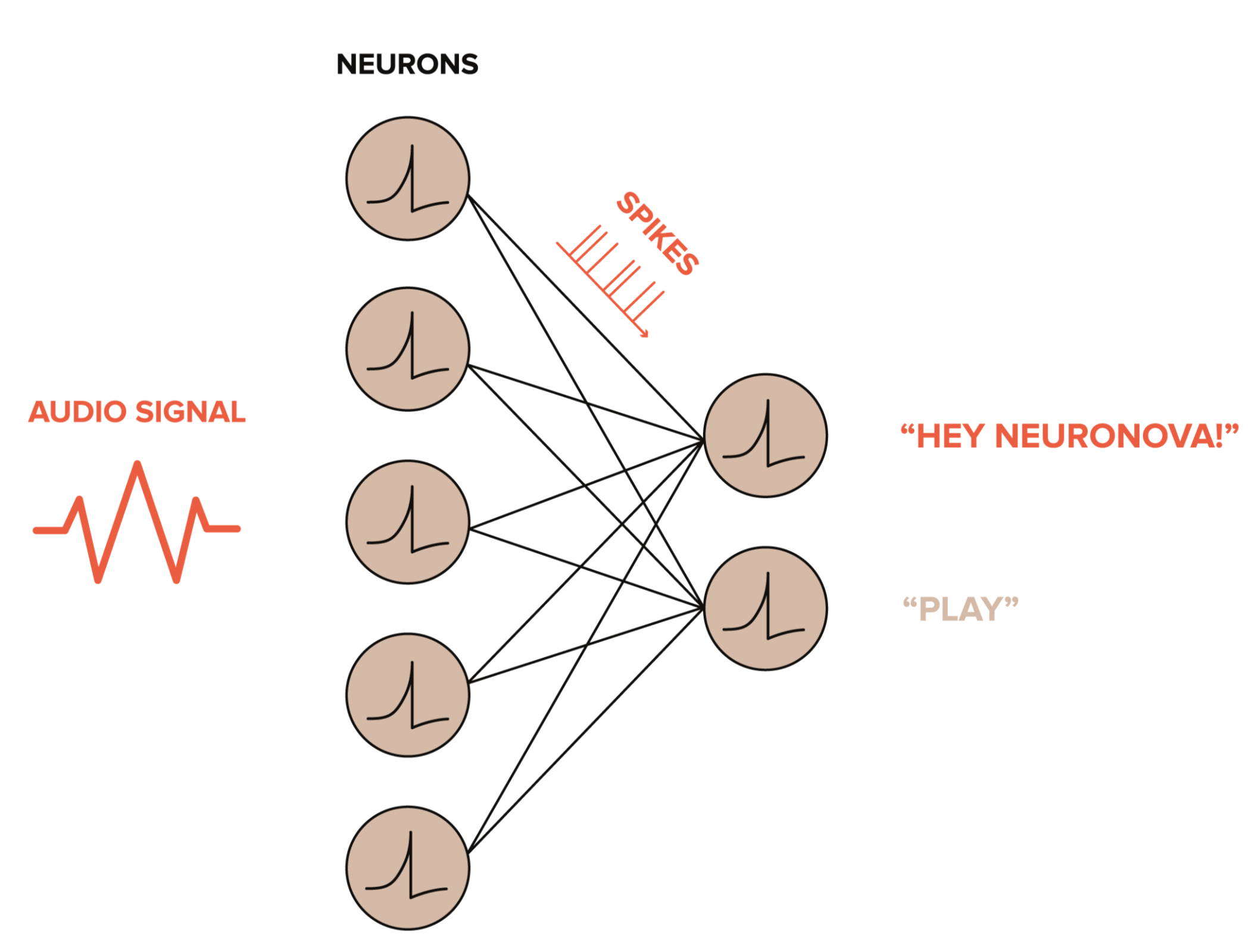

Artificial neural networks running on conventional digital hardware require hundreds of thousands of parameters to handle temporal tasks, such as keyword recognition ("Hey Neuronova!").

Our networks achieve the same accuracy while consuming up to 1000x less power and using only a few hundred parameters.


Towards full sensor integration

Future sensors will be intelligent and programmable, with adaptive front ends that can compensate for sensor drift and non-idealities, and also solve complex tasks without the need for a microcontroller. We are working on sensor integration to bring our chip into the package of every sensor.

A fully event-driven pipeline

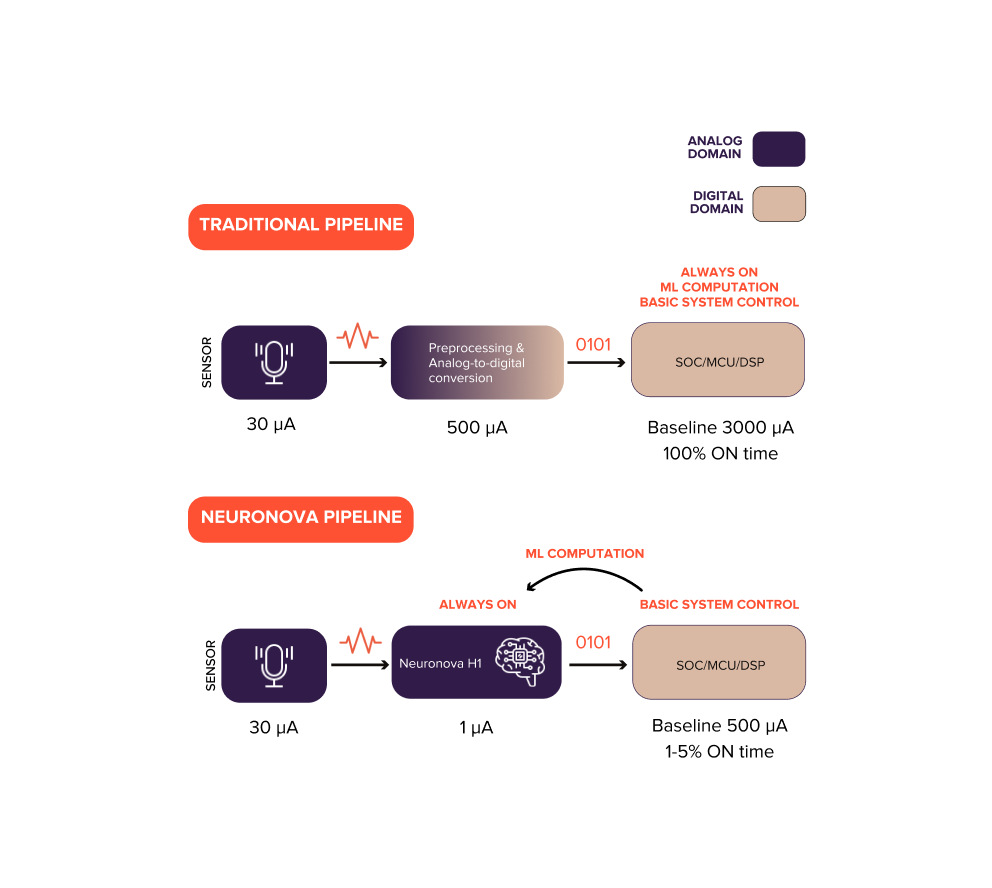

Traditional architectures digitize raw sensor signals and keep the ADC & MCU/DSP active for preprocessing and inference, creating a fixed always-on power overhead.

With Analog-to-Information™, Neuronova processes temporal signals directly in the analog domain and transmits only meaningful events or compact digital features to the central processor. Always-on inference runs at ~1 µW, waking digital systems only when necessary.

Integration remains simple: the processor connects through standard SPI/GPIO interfaces and fits into existing system architectures like any other component.
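From the host's point of view, this integration pattern can be sketched as a simple simulation: the MCU stays asleep until the front-end asserts a GPIO interrupt, then reads one compact event code over SPI. The event codes, the one-code-per-interrupt payload and the `host_loop` function are hypothetical placeholders; the actual H1 register map and SPI protocol are not described here, and real firmware would use the MCU vendor's interrupt and SPI drivers rather than Python callables.

```python
from typing import Callable, Iterable, List, Tuple

# Hypothetical event codes, for illustration only (not the documented H1 payload).
EVENT_NAMES = {0x01: "wake_word", 0x02: "voice_activity", 0x03: "acoustic_event"}


def host_loop(
    irq_line: Iterable[bool], spi_read_event: Callable[[], int]
) -> Tuple[List[Tuple[int, str]], int]:
    """Simulate a host MCU woken only by the front-end's GPIO interrupt.

    irq_line: per-tick level of the interrupt pin (True = event pending).
    spi_read_event: reads one event code over SPI (simulated here by a callable).
    Returns the handled (tick, event) pairs and the number of ticks spent asleep.
    """
    handled: List[Tuple[int, str]] = []
    asleep_ticks = 0
    for tick, pending in enumerate(irq_line):
        if not pending:
            asleep_ticks += 1  # no interrupt: the digital side stays in low-power sleep
            continue
        code = spi_read_event()  # wake up and fetch the compact event over SPI
        handled.append((tick, EVENT_NAMES.get(code, "unknown")))
    return handled, asleep_ticks


codes = iter([0x01, 0x03])
irq = [False] * 5 + [True] + [False] * 3 + [True]
print(host_loop(irq, lambda: next(codes)))
```

In this toy run the host handles two events and sleeps for the other eight ticks; raw sensor samples never reach it, only the event codes the front-end chooses to report.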

Native temporal neural networks

Conventional digital neural networks for temporal tasks (e.g., wake word detection such as “Hey Neuronova!”) typically require on the order of 100,000 parameters and continuous digital activity. Our analog neuromorphic architecture achieves the same accuracy with ~1,000 parameters, not through model compression, but by using a fundamentally different form of computation in the analog and time domain. In digital-equivalent terms, this corresponds to 145 TOPS/W efficiency for temporal inference.

This represents a fundamental shift in how neural networks are physically realized: not a compressed model, but a different computational substrate.
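As a worked conversion of the 145 TOPS/W figure (assuming, for illustration, that it applies at the ~1 µW always-on operating point, which is not stated explicitly here), the efficiency η translates into energy per operation and sustained operation rate as follows:

```latex
E_{\text{op}} = \frac{1}{\eta} = \frac{1\ \text{J}}{145 \times 10^{12}\ \text{op}} \approx 6.9 \times 10^{-15}\ \text{J/op} \approx 6.9\ \text{fJ/op}

R_{\text{op}} = P \cdot \eta = (1 \times 10^{-6}\ \text{W}) \times (145 \times 10^{12}\ \text{op/J}) = 1.45 \times 10^{8}\ \text{op/s}
```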

Intelligence inside the sensor

Today, Neuronova integrates as a discrete ultra-low-power front-end, removing the always-on burden from the central SoC. The natural evolution is System-in-Package (SiP), where the processor is co-packaged with the sensor into a single intelligent module.

By embedding intelligence at the sensing layer, sensors evolve from passive, commoditized components into differentiated, value-generating systems, processing temporal information locally and transmitting events instead of raw data.

This foundational compute layer enables scalable intelligence for the trillion-sensor economy.

Rewriting the intelligence–energy equation

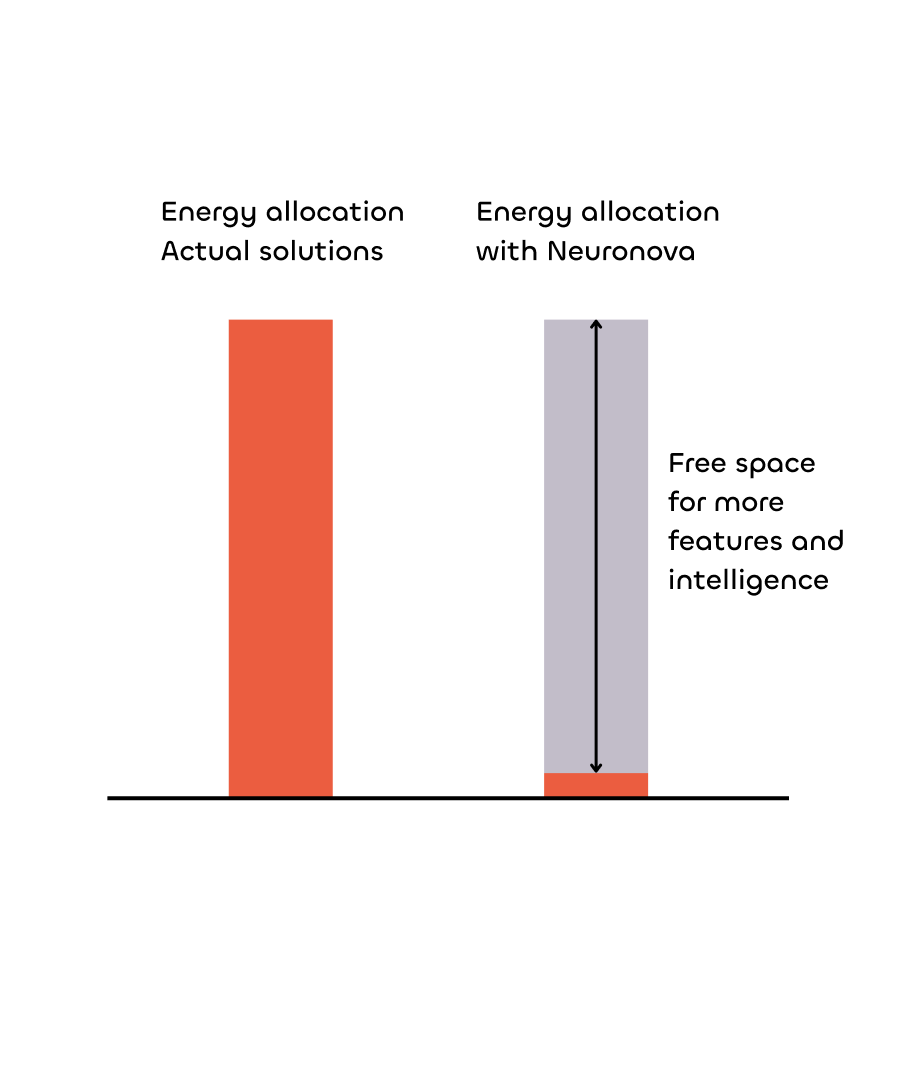

Every device operates within a fixed energy envelope.

In conventional digital architectures, always-on intelligence consumes most of that budget, forcing a trade-off between functionality and battery life.

Analog-to-Information™ minimizes the always-on baseline by embedding intelligence directly in the analog domain, below the digital energy floor.

Peak compute remains unchanged.

What changes is the baseline.

This is not only about reducing power.

It is about freeing energy for more intelligence within the same battery budget.

More features. Same battery.
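To make the baseline argument concrete, here is a minimal arithmetic sketch that models average power as an always-on baseline plus duty-cycled peak compute. All figures (100 mAh at 3.7 V, a 1 mW conventional always-on pipeline, a 20 mW SoC active 1% of the time) are illustrative assumptions, not Neuronova measurements; only the ~1 µW front-end baseline comes from this page. The model also ignores SoC sleep current and regulator losses.

```python
def average_power_w(always_on_w: float, active_w: float, duty_cycle: float) -> float:
    """Average power = always-on baseline + duty-cycled peak compute (all in watts)."""
    return always_on_w + duty_cycle * active_w


def battery_life_hours(capacity_mah: float, voltage_v: float, avg_power_w: float) -> float:
    """Idealized battery life: stored energy (Wh) divided by average power (W)."""
    energy_wh = capacity_mah / 1000.0 * voltage_v
    return energy_wh / avg_power_w


# Illustrative assumptions only (not measured data):
CAPACITY_MAH, VOLTAGE_V = 100.0, 3.7  # small wearable battery
ACTIVE_W, DUTY = 20e-3, 0.01          # SoC peak compute, active 1% of the time

conventional = average_power_w(1e-3, ACTIVE_W, DUTY)  # digital always-on pipeline (~1 mW, assumed)
event_driven = average_power_w(1e-6, ACTIVE_W, DUTY)  # ~1 µW analog front-end baseline

for name, p in [("conventional", conventional), ("event-driven", event_driven)]:
    hours = battery_life_hours(CAPACITY_MAH, VOLTAGE_V, p)
    print(f"{name}: {p * 1e3:.3f} mW average, {hours:.0f} h")
```

The peak-compute term (`ACTIVE_W`, `DUTY`) is identical in both cases; only the always-on baseline changes. The energy freed by the lower baseline can be spent either on longer battery life or on additional features.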



doing more with less

TECHNOLOGY

H1

H1 is our most advanced processor to date: a fully analog chip for spiking neural networks at the edge. Fabricated, packaged and measured on silicon, it is a neuromorphic AI chip designed for always-on inference in battery-powered devices.

It implements physical neural networks in hardware and operates below the digital energy floor, enabling event-driven edge AI at sub-microwatt power levels.

Reprogrammable analog neurons and synapses with a configurable analog front-end

Ready-to-use silicon-calibrated network for typical use cases

Training and deployment via API

Standard digital output (SPI / GPIO interrupt)

3 analog cores

Chip size: <2 × 2 mm

Process: 180 nm standard CMOS

Always-on inference power: <1 μW

Standard system integration:

SPI & GPIO available

Known Good Die (KGD) & System-in-Package options on request

Commercial temperature range

WLBGA package

Silicon-calibrated SDK

PyTorch-compatible for standard software workflow

Power & accuracy calibrated on real silicon

Enables rapid benchmarking and hardware-aware optimization

USE CASES

Always-on audio intelligence

We start from audio, the most demanding always-on sensing domain, to build a new front-end intelligence layer for wearables and smart devices. Audio is becoming the primary interface to technology, from simple commands to LLM-driven interactions, yet the always-on bridge between the physical and digital worlds is still missing. Neuronova fills that gap.

Core voice building blocks

Wake word detection

An ultra-low-power, always-listening front-end that verifies the wake word directly next to the microphone. The SoC and DSP remain asleep until a validated interaction begins, enabling true event-driven activation.

Voice activity detection (VAD)

Continuous detection of speech presence at the analog front-end, before the digital audio pipeline turns on. Enables smarter power management and tighter control of downstream processing during calls and standby modes.

Keyword spotting

Recognition of short voice commands running always-on below 1 µW. Supports natural, hands-free interaction without continuous digital overhead.

Context & environment

Acoustic scene classification

Real-time understanding of acoustic context (speech, traffic, indoor/outdoor environments), running continuously at ultra-low power. Enables adaptive system behavior without activating peak compute resources.

Acoustic event detection

Always-on detection of meaningful sound events such as alarms, knocks, impacts, or environmental cues. Only relevant events are forwarded to the digital system, reducing data movement and unnecessary compute.

System optimization

Denoising assistance

Continuous VAD and scene awareness provide contextual information to the digital audio pipeline. The SoC can dynamically adapt filters and model parameters based on real-time conditions, while remaining inactive when no meaningful processing is required.

A fully event-driven audio pipeline.

Pre-trained audio models

Pre-trained audio models are available in our SDK, trained on public datasets to accelerate evaluation and benchmarking of always-on audio use cases. Models can be adapted, combined and deployed through a hardware-aware workflow designed for rapid iteration.

From evaluation to deployment

Evaluate: Benchmark pre-trained or custom models using our silicon-calibrated SDK.

Validate: Measure always-on inference directly on the H1 evaluation kit.

Integrate: Connect via SPI/GPIO as a discrete IC or System-in-Package module.

Scale: Move to production with industrial-ready packaging and supply chain.

10× lower smart-standby power

Up to 5× battery-life extension

1 µW: always-on pipeline offloaded to our 1 µW chip

Lower BOM: lower-tier components, a smaller battery, or the same battery with more functionality

EVALUATION KIT

Try our technology

Evaluation board: Silk 1


- USB-C & BLE
- STM microcontroller onboard (STM32H755ZIT)
- API for simple deployment
- Arduino R1 connector for sensor expansion boards

The Silk 1 evaluation board enables direct validation of always-on inference below 1 µW on fabricated silicon. Measure power consumption, latency and wake-up behaviour in real-world conditions. Designed for rapid benchmarking and system integration testing.

What you can validate

- Always-on inference power (measured on hardware)
- Event-driven wake-up of the MCU
- Latency from signal to event
- Integration via SPI/GPIO
- Arduino-compatible expansion header for custom sensor modules


Expansion shield: Microphones

ONBOARD MICROPHONES

TDK ICS-40212

STM IMP232ABSU

Knowles MM20-33366

Knowles MM25-33663


What you can do in under one hour

- Select a pre-trained or custom model in the silicon-calibrated SDK
- Run power and accuracy estimation (calibrated on measured silicon)
- Deploy to the evaluation board and stream events over SPI

What’s included

- H1 packaged silicon
- Silk 1 evaluation board
- Silicon-calibrated SDK

TECHNOLOGY

Silicon-calibrated SDK

Bringing analog neural networks into standard ML workflows.

The Neuronova SDK enables training, validation and deployment of temporal neural networks calibrated on measured silicon parameters.

Designed for rapid iteration and hardware-aware optimization.

What makes it different

PyTorch-compatible workflow

Power and accuracy estimation calibrated on real silicon

Hardware-aware training

Direct deployment to H1 silicon

For early adopters

Access to pre-trained always-on audio models

Early integration support

Feedback loop into next silicon revisions

The trillion-sensor shift

Audio is our entry point, not the final destination.

Over 46 billion sensors are shipped every year (microphones, motion sensors, vibration nodes, biosignal electrodes, pressure and environmental monitors), embedded across devices, infrastructure, vehicles, robotics and industrial systems.

On the path to trillions.

Every one of these sensors generates continuous analog signals.

Every one is interpreted through a digital pipeline.

And every digital pipeline carries a fixed always-on energy cost.

As AI scales, this cost compounds, not only at the edge, but across cloud and data center infrastructures that process the resulting data streams. Intelligence remains centralized, compute remains power-hungry, and the physical world remains under-interpreted.


The next era of computing will not be defined by more centralized processing.

It will be defined by intelligence moving directly into the sensing layer.

Our Analog-to-Information™ architecture enables a foundational compute layer below the digital energy floor, purpose-built for sensors and scalable across sensing markets. From discrete integration to System-in-Package modules, this architecture transforms sensors from passive data sources into active, event-driven intelligent systems.

Designed and fabricated in Europe.

Built on mature CMOS.

Measured on silicon.

AI today is trapped in data centers.

We bring it to the real, physical world.


OUR TEAM

MEET THE PEOPLE behind our company

Advisory Board


PLANS
